
‘It’s a marathon, not a sprint’ is a phrase commonly used to describe how a slower and steadier approach will help you to conserve energy, allowing you to complete the whole journey by taking gradual steps forward. The idea behind this is to help you absorb the finer details instead of having to frantically try to cross the finish line, leaving you feeling burnt out and lacking in motivation to take the next step on another journey.

But what if you could combine the qualities of going the distance with sudden bursts of change to amplify organic visibility?

By no means does this post replace a long-term strategic approach. Instead, once you have clearly defined your goals, adopting these techniques can not only help to edge you closer to those goals at a faster rate, but also ensure you are being productive on the right things.

So hopefully, by reading and implementing the points below, you will be on your way. These points are aimed at anyone, but as the post is about gaining better coverage within a shorter timeframe, it is especially relevant to sites that already have a strong domain authority and link profile. There are a few reasons for this:

- Links still influence performance:

Despite counter statements indicating that links are either massively devalued compared to a few years ago or, in some cases, perceived as an unnecessary part of the Google machine (this has probably come from those ‘SEO is Dead’ folk), there is overwhelming evidence to suggest that they are integral in determining the order of search results.

In this post, I share how you can test and witness their influence.

So based on this evidence, if a site already has an established domain, which covers core-ranking factors, it will mean the changes suggested below will have a better chance at succeeding at a faster rate.

- Link building takes time:

If you are already familiar with the link building process, then you will know that nowadays, it takes longer than spinning some content and pushing a button to submit them to unsavoury sites.

But if you are less familiar with the concept, acquiring high-quality links takes time! And in fact, even if you were to attempt to fast track the process, this in itself can have a detrimental effect on performance.

A little caveat: for sites which have a stronger link profile, applying a few links can make all the difference, allowing you to move the average keyword group positions for a page from 12th to 5th.

And we know that by increasing ranking positions, the CTR should improve – here’s a chart to help illustrate this:

2014 study conducted by Advanced Web Ranking

This is simply an average across sectors and query types (there are strong fluctuations between these and, as shown below, there are a few ways that you can influence the CTR), but it helps to give a good indication of the potential CTR that you can receive.
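To make the 12th-to-5th example more concrete, here is a minimal sketch of how the extra clicks from a position change could be estimated. The CTR values in `EXAMPLE_CTR_BY_POSITION` are hypothetical placeholders, not the figures from the Advanced Web Ranking study, and the function names are my own; swap in the CTR curve for your own sector before drawing any conclusions.

```python
# Hypothetical CTR-by-position values, for illustration only.
# These are NOT taken from the Advanced Web Ranking study.
EXAMPLE_CTR_BY_POSITION = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
                           10: 0.02, 12: 0.01}


def estimated_clicks(monthly_searches, position, ctr_by_position):
    """Estimate monthly clicks for a keyword at a given ranking position."""
    ctr = ctr_by_position.get(position, 0.0)  # positions not in the curve count as 0
    return monthly_searches * ctr


def click_uplift(monthly_searches, old_position, new_position, ctr_by_position):
    """Extra monthly clicks expected from moving between two positions."""
    before = estimated_clicks(monthly_searches, old_position, ctr_by_position)
    after = estimated_clicks(monthly_searches, new_position, ctr_by_position)
    return after - before


if __name__ == "__main__":
    # A keyword with 1,000 monthly searches moving from 12th to 5th.
    uplift = click_uplift(1000, 12, 5, EXAMPLE_CTR_BY_POSITION)
    print(f"Estimated extra clicks per month: {uplift:.0f}")
```

Because the curve is passed in as a parameter, the same functions can be reused with separate curves for branded and non-branded queries, or for different devices.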

Identify your current visibility – know what’s realistic & provides value

Pay particular attention to those related page groups which are placed either in the top 10 or between 11th and 20th: subtle movements within these positions can help you benefit from a better CTR in the short term.

Once deciding which pages to select, extra weight should be given towards those pages which already have links directed to them.

To do this, SEMrush & SEOmonitor are both amazing tools to help pinpoint where those golden nuggets lie.

My personal choice for analysing backlink activity is Majestic SEO, while Screaming Frog is an amazing scraping tool. By merging the strengths of these tools, we are able to understand a few quick insights:

- Based on their existing presence, current link profile & content relevancy, determine which pages and sections are realistic targets.

- Assess the influence of pages and sections that provide goal completions. This is important in helping to assign value to a session or unique page view.

- The number of pages that facilitate a visitor’s user intent. For example, those seeking to know more about what luxury holidays are available across Europe, yet are undecided on a specific location will want to know more information such as weather, things to do, costs and options before they make further decisions.

- Whether the bounce rate across different devices is more pronounced. This can help identify whether additional user testing is required to establish why this is happening.

Here are a few steps I use while using this process:

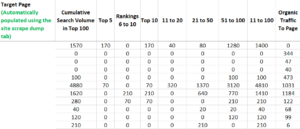

- Using the export files for SEMrush, Screaming Frog (include HTML and 200 pages), & Majestic SEO (only the URL and root domains), add these into tabs, similar to the below:


- The GA tab I have uses SeoTools for Excel, which taps into the Google Analytics API. It plots out a range of metrics, including sessions, bounce rate, goal completions, exit rate, unique pageviews and accessibility score, plus bounce rate & sessions split across mobile, desktop & tablet devices.

- Then, by applying VLOOKUP & SUMIFS formulas, the 1st tab, ‘Key Pages’, is populated with the URL, ranking ranges, traffic, bounce rate and goals. Here is a preview with the target page URLs removed:

The above image presents the total available monthly searches per page. Once this is combined with metrics such as organic traffic, meta titles, goal completions and the total number of inbound links towards the page, it becomes possible to establish more accurately what is influencing this presence.
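If you would rather script this than build it in Excel, the lookup-and-sum steps above can be sketched in Python. This is only an illustration under assumptions: the field names (`url`, `position`, `search_volume`, `sessions`, `goal_completions`) and the function names are mine, not the column headers of the SEMrush, Majestic or Google Analytics exports, so map them to whatever your files contain.

```python
from collections import defaultdict


def ranking_range(position):
    """Bucket a ranking position into the ranges used in this post."""
    if position <= 10:
        return "top 10"
    if position <= 20:
        return "11-20"
    if position <= 50:
        return "21-50"
    return "51+"


def build_key_pages(keyword_rows, linking_domains, analytics):
    """Join per-keyword rankings with per-URL link and analytics data.

    keyword_rows: iterable of dicts with 'url', 'position', 'search_volume'
    linking_domains: dict of url -> number of linking root domains
    analytics: dict of url -> dict with 'sessions' and 'goal_completions'
    """
    pages = {}
    for row in keyword_rows:
        url = row["url"]
        if url not in pages:
            stats = analytics.get(url, {})
            pages[url] = {
                "url": url,
                "volume_by_range": defaultdict(int),
                # VLOOKUP-style lookups, defaulting to 0 when a URL is missing
                "linking_domains": linking_domains.get(url, 0),
                "sessions": stats.get("sessions", 0),
                "goal_completions": stats.get("goal_completions", 0),
            }
        # SUMIFS-style aggregation of search volume per ranking range
        bucket = ranking_range(row["position"])
        pages[url]["volume_by_range"][bucket] += row["search_volume"]
    return pages


def quick_win_candidates(pages):
    """Pages with 11th-20th volume, those with existing links listed first."""
    candidates = [p for p in pages.values()
                  if p["volume_by_range"].get("11-20", 0) > 0]
    return sorted(
        candidates,
        key=lambda p: (p["linking_domains"], p["volume_by_range"].get("11-20", 0)),
        reverse=True,
    )
```

Sorting by linking domains first reflects the earlier point that extra weight should go to pages which already have links directed to them; change the sort key if goal completions matter more for your site.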

This is particularly useful during competitor analysis because it can help to isolate the reason why they have been able to acquire this visibility. There are over 200 ranking factors – so obviously, it will be impossible to exactly determine why they have this presence. But by including key factors, such as:

- Inbound links

- Internal linking

- Meta tags

- Content relevancy/depth

A correlation can be made, meaning if your work is more aligned with what they are undertaking, you will be more confident that by replicating this activity, it can bear fruit.

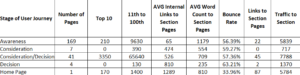

Finally, in the second tab, ‘insights’, here is a preview of one table:

By tagging the user journey stage against each page (unfortunately this is a manual job), this can help you assess how your site content is distributed.

Importantly, this can provide additional insights: for example, whether new pages need creating, or whether more targeted work needs to be applied to improve positions for pages which are already ranking in more advanced positions.

1. Refresh Your Content to Reengage Bots

Sellyourjamjar.co.uk Analysis

To help illustrate the strength of this approach, I have selected a site which is in a relatively competitive vertical.

Interestingly, initially, this site was chosen to demonstrate the positive long-term effects subtle changes can have on your organic exposure.

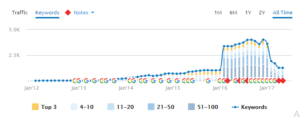

However, in the last few months, it is clear that their organic presence has suffered. See the below SEMrush graphic:

Prior to this decrease, it is important to note that this site had experienced sustained growth since January 2014. They occupied the top positions for the ‘sell your car’ & ‘buy your car’ market for at least three years.

For many of you who are involved in SEO, this would have seemed perplexing, particularly when their techniques appeared to contradict long held beliefs on what influences organic visibility. These include:

- 90% of their link profile was dominated with link directories.

- They were using high volumes of keyword focussed anchor text within their internal links.

- Thin content held within poorly designed templates

These anomalies tickled my curiosity, so I went away and conducted a mini-analysis. Here is a summary of those findings:

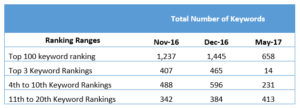

- In September 2014, the https://www.sellyourjamjar.co.uk/value-my-car/ page was only ranking for one keyword. Then, following anchor text changes to their internal links to reveal ‘sell my car’ in November 2014, the number of keywords ranking for the page improved to 20.

By January 2015, that had risen to 39 keywords.

- The content or page template did not change until some point between May and September 2015. We cannot precisely isolate when these changes were applied, but according to SEMrush data, it is clear that their presence increased further, to 77 keywords ranking in the top 20 positions.

From this, we can infer that the content was added in June or July 2015.

- Using Majestic’s backlink history comparison tool, it is clear that there was one link built in October 2014 and May 2015.

Using links and added content to influence rankings is certainly not a ground-breaking discovery. The significance of these findings is that the initial improvement in organic visibility occurred following the changes within the anchor text of the internal links.

Granted, the link which was built in October 2014 could have contributed towards this, but it is also interesting to note, that following changes to the navigation and internal linking in November 2016, from this:

To this:

As the table presents, between November and December, there was a slight improvement in organic visibility.

Then in February 2017, the subsequent drop in exposure followed and it continually fell to the present month, May 2017. Notice the significant drop in top three ranking positions for May 2017:

To help explain this drastic shift in performance: according to Search Engine Land, there was an unconfirmed Google algorithm update, with indications that it targeted spam linking activity.

Folks in the “black hat” SEO community seem to be noticing this and complaining that their tactics are not working as well.

If these suspicions are true, it becomes increasingly apparent that spammy linking activity has started to negatively affect their performance.

To Summarise the Key Insights from Discovering Your Own Technique:

1. Despite helping to influence performance for a number of years, spam link building tactics are not sustainable and will inevitably be penalised by search algorithms.

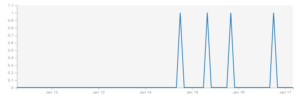

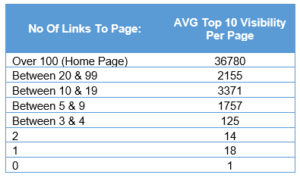

It was apparent that following a link being built in October 2014, the number of ranking keywords significantly increased. You can rightly argue that this was a long time ago and today, links may not have the same influence. But following a brief analysis into a luxury travel provider Elegant Resorts, it is clear that the number of links towards a page does positively influence top 10 ranking visibility:

By establishing a relationship between when a link was built to a page and the impact it had on site visibility, 3 answers to difficult questions can be proposed:

- Did the link(s) have a direct impact on performance over a sustained period of time?

- How long did it take to see an improvement in rankings for that page? Tip: consider using a competitor’s site, which has a similar link profile to your site. By following this logic, your site has a better chance of following the analysed sites’ success

- What was the link in question and how can I better align my activity to similar types of links? For example:

- Was it a link within the content? If yes, where?

- What was the anchor text and does the page’s distribution seem balanced?

- What is the link profile & presence of the site – is it similar to your site, or is it a much stronger site which can distort what you can realistically achieve?

This analysis was undertaken by:

- Compiling SEMrush ranking data and grouping pages into ranking ranges such as the top 10, 11th to 20th and 21st to 50th (similar to the ranking range image displayed above)

- Using Majestic SEO data which isolated the number of unique linking root domains per page.

By combining these two sources, I was able to evaluate the extent to which the number of links positively contributed towards the average visibility of the top 10 ranking positions.

This was achieved by dividing the cumulative top 10 monthly search volume for each group of pages by the total number of pages in that group.

The table shows a strong correlation favouring more links towards a page, and the pages with zero links directed to them offer a compelling insight. To put it simply, 170 pages had zero links towards them. Out of those 170 pages, there were three keywords ranking in the top 10. Each had 60 monthly searches, meaning the total cumulative searches was 180.

By dividing the total monthly search volume (180) by the total number of pages (170), it presented, on average, roughly one available monthly search per page (180 / 170 ≈ 1.06) for a top 10 result. Minimal presence, indeed!
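The calculation above can be sketched in a few lines of Python. This is a rough illustration rather than the exact spreadsheet I used: the function name is hypothetical, pages are grouped by their exact number of linking root domains (you may prefer wider bands), and the sample data spreads the three ranking keywords across three separate pages, which is an assumption made purely to reproduce the totals.

```python
from collections import defaultdict


def average_top10_volume_per_page(pages):
    """Average top 10 monthly search volume per page, grouped by link count.

    pages: iterable of (linking_root_domains, top10_monthly_search_volume),
           one tuple per page; pages with no top 10 keywords have volume 0.
    Returns a dict of link count -> average searches per page.
    """
    totals = defaultdict(lambda: [0, 0])  # link count -> [page count, volume]
    for links, volume in pages:
        totals[links][0] += 1
        totals[links][1] += volume
    return {links: volume / count for links, (count, volume) in totals.items()}


if __name__ == "__main__":
    # The zero-link group from the text: 170 pages, three top 10 keywords
    # with 60 monthly searches each (assumed here to sit on three pages).
    zero_link_pages = [(0, 60)] * 3 + [(0, 0)] * 167
    averages = average_top10_volume_per_page(zero_link_pages)
    print(f"{averages[0]:.2f} searches per page")  # 180 / 170, about 1.06
```

Feeding in every page from the Majestic and SEMrush exports gives one average per link count, which is the comparison the table is making.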

A caveat: quality links will always outweigh quantity. So do not feel an excessive number of links (over 10) per page is a requirement. In fact, excess inbound links to a page can result in diminishing returns, causing wasted time & effort.

A key point is that links remain a central part of the algorithm, and in my opinion, they are likely to remain that way for the near future.

2. Including keyword-rich anchor text within internal links does appear to affect rankings. But, and this should go without saying, don’t needlessly stuff your content with repetitive exact match anchor text links. Instead, give the search engines a natural reference point which will help guide them to what the page is about.

This is only one element of undertaking internal linking. Neil Patel presents a comprehensive overview of internal linking here.

3. The navigational change also improved performance. However, it would be interesting to have understood the sustained impact a mega menu would have had if the February penalty did not occur.

4. Unsurprisingly, considering that search engines index content, it was clear that further ranking improvements occurred following the content changes in June 2015.

This means creating rich, semantically relevant content (as demonstrated earlier in the post) not only adds value but is also capable of producing results within a short time-frame.
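On the internal linking point (item 2), one rough way to see whether a page leans too heavily on a single exact match phrase is to count how its internal anchor text is distributed. The sketch below uses only Python's standard library; the class and function names are my own, and what share counts as "too repetitive" is a judgement call rather than a known threshold.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class InternalAnchorCollector(HTMLParser):
    """Collect the anchor text of links pointing to the same host as the page."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.host = urlparse(page_url).netloc
        self.anchors = Counter()
        self._inside_internal_link = False
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Resolve relative links against the page URL before comparing hosts.
        if href and urlparse(urljoin(self.page_url, href)).netloc == self.host:
            self._inside_internal_link = True
            self._text = []

    def handle_data(self, data):
        if self._inside_internal_link:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._inside_internal_link:
            # Normalise whitespace and case so variants are counted together.
            text = " ".join("".join(self._text).split()).lower()
            if text:
                self.anchors[text] += 1
            self._inside_internal_link = False


def anchor_text_share(html, page_url):
    """Return each internal anchor text's share of all internal links."""
    collector = InternalAnchorCollector(page_url)
    collector.feed(html)
    total = sum(collector.anchors.values())
    if total == 0:
        return {}
    return {text: count / total for text, count in collector.anchors.items()}
```

Run it against the HTML of a page saved from a crawl; if one phrase accounts for most of the internal links, that is a prompt to vary the wording rather than proof of a problem.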

Of course, this isn’t an exhaustive list by any means. But I hope this has given you a better idea on which techniques to employ, making that all important difference to growing your organic presence. Feel free to share your own ideas and if you attempt these tactics, I would love to hear your successes or failures.


People are impatient. This is especially true for those who have either devoted their time or paid someone else to enhance their SEO presence. Across many circles, we are told that SEO gains take time, or they may not arrive at all. This combination of thoughts can understandably lead to everyone’s dirty word in business nowadays: procrastination. To help get you out of this mode, these points can help you get to work on the right things:

- Links still matter. It is undeniable that sites with stronger link profiles outrank their weaker counterparts. Therefore, for sites that already have a strong profile, there is a greater chance that subtle changes can catapult you to greater success.

- Identify the existing search presence of your site, site categories and specific pages. SEMrush and SEOmonitor are two great tools for establishing this. Identifying pages that are already ranking strongly helps you to prioritise your efforts and realise benefits more quickly.

- Refreshing content: this can involve updating meta titles by harnessing the power of the RankBrain update; updating historic blog posts by adding fresh insights or improving their optimisation; or lastly, deindexing your site. This last tip may sound extreme, but I have had evidence of it working.

- If you have gone through site migrations and changing pages, ensure redirects are properly mapped to the correct location & if there are multiple redirect hops, consider reducing the length of the chain.

- As you know, search engine algorithms are in constant flux. The effect of these changes can vary over time, so it is worthwhile carrying out your own tests to gauge the impact certain tactics are having. Using a combination of the Wayback Machine, SEMrush & Majestic, it is possible to isolate which on-page or off-page changes occurred within a month and determine whether they correlated with a change in performance that was sustained over a longer period of time.
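The redirect point in the summary above is easy to check offline once you have a list of redirects, for example from a crawl export. Here is a minimal sketch, assuming the redirects have already been loaded into a plain dictionary of source URL to target URL; the function names and the `max_hops` limit are my own choices.

```python
def redirect_chain(start_url, redirect_map, max_hops=10):
    """Follow a URL through a redirect map and return every URL visited.

    redirect_map: dict of source URL -> target URL.
    Stops when a loop is detected or after max_hops redirects.
    """
    chain = [start_url]
    seen = {start_url}
    current = start_url
    while current in redirect_map and len(chain) <= max_hops:
        current = redirect_map[current]
        chain.append(current)
        if current in seen:  # loop: the chain ends at the repeated URL
            break
        seen.add(current)
    return chain


def chains_to_flatten(redirect_map):
    """Return {source: last URL in its chain} for sources needing more than one hop.

    Redirect loops are included too, ending at the repeated URL, so they
    stand out in the result.
    """
    result = {}
    for source in redirect_map:
        chain = redirect_chain(source, redirect_map)
        if len(chain) > 2:  # start, one intermediate URL at least, destination
            result[source] = chain[-1]
    return result


if __name__ == "__main__":
    # Hypothetical paths: /old redirects twice before reaching /new.
    redirects = {"/old": "/interim", "/interim": "/new", "/legacy": "/new"}
    for source, destination in chains_to_flatten(redirects).items():
        print(f"{source} should redirect straight to {destination}")
```

Each source in the result is a candidate for a single direct redirect, which shortens the chain as suggested.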


viagra for men Rumination is another symptom that may resemble vomiting.

Help with financial accounting homework

October 23, 2020 - 5:51 pmNice Article thanks for your valuable information, we work 24*7 for your convenience, so you don’t need to wait help with financial accounting homework

payday loans online

October 23, 2020 - 7:01 pmhttp://www.loansonline1.com payday loan online

GeorgeLic

October 23, 2020 - 10:23 pmgeneric female viagra pills over the counter walmart pharmacy genericviagra2o generic viagra sale.

Third Grade Homework

October 24, 2020 - 1:49 ambest refinance interest rates today

AmyKnill

October 24, 2020 - 1:56 amceftin 300 mg

GeorgeLic

October 24, 2020 - 4:27 amwhere can i buy cheap generic viagra online generic viagra 100 generic viagra be available.

DavidTed

October 24, 2020 - 4:30 amcialis viagra cheap generic genericviagra2o what will teva viagra generic cost.

Payday Loans

October 24, 2020 - 10:46 amdebt consolidation loan

GeorgeLic

October 24, 2020 - 11:14 amgeneric viagra in cape coral florida is generic viagra as good generic viagra from canada.

AmyKnill

October 24, 2020 - 4:04 pmeffexor 37 5 mg

Homework Charts

October 24, 2020 - 4:22 pmconclusion on a research paper

DavidTed

October 24, 2020 - 4:30 pmwhen will viagra become generic? generic viagra tablets generic viagra cost.

GeorgeLic

October 24, 2020 - 7:26 pmgeneric viagra online without a prescription genericviagra2o.com local pharmacy generic viagra.

kamagra

October 24, 2020 - 8:50 pmGreat tips. Kudos., home pharma kamagra http://www.kamagrapolo.com kamagra uk next day

erotik

October 25, 2020 - 12:43 amIf you want to use the photo it would also be good to check with the artist beforehand in case it is subject to copyright. Best wishes. Aaren Reggis Sela

sikis izle

October 25, 2020 - 1:50 amIf you want to use the photo it would also be good to check with the artist beforehand in case it is subject to copyright. Best wishes. Aaren Reggis Sela

LisaKnill

October 25, 2020 - 2:58 amventolin pharmacy

DavidTed

October 25, 2020 - 3:32 amwhere to order generic viagra female generic viagra is viagra generic now?.

GeorgeLic

October 25, 2020 - 4:26 amdark blue generic viagra in india genericviagra2o cheap viagra generic india.

geico

October 25, 2020 - 7:10 ampersonal auto insurance

KiaKnill

October 25, 2020 - 7:19 amviagra otc uk

home loan login

October 25, 2020 - 5:02 pmindependent mortgage

mortgage 90

October 25, 2020 - 7:53 pmchatiw

viagra without doctor prescription

October 26, 2020 - 12:22 amhttps://www.withoutvisit.com viagra without a doctor prescription

LisaKnill

October 26, 2020 - 3:43 amviagra uk

asgenviagria.com

October 26, 2020 - 1:19 pmIf pregnancy does not occur, the corpus luteum withers and dies, usually around day 22 in a 28-day cycle.

https://asgenviagria.com alternative

to viagra


kamagra oral jelly kaufen thailand

Best Payday Loan

November 2, 2020 - 4:35 pmmicroloan

bumndej generic cialis online

November 2, 2020 - 5:05 pmcialis ideal dosage

cialis coupon 2018

cialis super active 20mg

kamagra bestellen rotterdam

November 2, 2020 - 5:19 pmkamagra oral jelly in thailand

kamagra oral jelly for sale in usa illinois

kamagra oral jelly gГјnstig kaufen deutschland

AmyKnill

November 2, 2020 - 5:52 pmbuy zyrtec online usa

Ralphswino

November 2, 2020 - 6:34 pmside effects of cialis 5mg

https://cialgen.com/

cialis coupon card for 20 mg

GlennErype

November 2, 2020 - 6:48 pmkamagra 100mg oral jelly price in egypt

kamagra oral jelly deutschland

super kamagra forum hr

cialis cheap cialis

November 2, 2020 - 8:03 pmlevitra vs cialis vs viagra reddit

cost comparison viagra vs cialis vs levitra

walgreen cialis price without insurance

kamagra oral jelly directions use

November 2, 2020 - 8:20 pmkamagra 100mg oral jelly suppliers india

kamagra 100mg oral jelly amazon

kamagra oral jelly 100mg 1 week pack

viagra costs at walmart

November 2, 2020 - 8:59 pmviagra costs at walmart http://www.zolftgenwell.org/

Qyzbyy

November 2, 2020 - 9:18 pmhttp://viskap.com/ – viagra without a doctor prescription Jzzdwo hbwfyg

online viagra prescription

November 2, 2020 - 9:19 pmviagra cialis levitra generici

como fazer viagra caseiro feminino

viagra side effects chills

Ralphswino

November 2, 2020 - 9:41 pmmaximum daily dose of cialis

cialis otc

cialis soft generic 20mg

GlennErype

November 2, 2020 - 10:00 pmkamagra shop erfahrungen 2016

https://kamajel.com/

kamagra oral jelly india price

price of tadalafil 10mg

November 2, 2020 - 10:15 pmprice of tadalafil 10mg http://www.lm360.us/

Robertunpal

November 2, 2020 - 10:58 pmbest price cialis and viagra

viagra generico

viagra without a doctor prescription safe

LisaKnill

November 2, 2020 - 11:08 pmus online pharmacy cialis

cialis reviews

November 2, 2020 - 11:21 pmgenerische cialis professional 20 mg

levitra vs cialis vs viagra cost

actors in cialis commercials

promosi poker online

November 2, 2020 - 11:37 pmSEO Tips to Achieve Your Goals Quickly –

Denver Burke http://vvekdetei.ru/bitrix/rk.php?goto=http://groupspaces.com/bestbandarqq/pages/where-is-situs-resmi-dominoqq

kamagra store uk

November 2, 2020 - 11:40 pmkamagra oral jelly 100mg reviews

the kamagra store reviews

kamagra gold from ajanta pharma

viagra sale

November 3, 2020 - 12:37 ambrand viagra 100mg price

viagra generic release date 2017

viagra preço portugal

GlennErype

November 3, 2020 - 1:21 amkamagra oral jelly india price

kamagra com

kamagra 100mg oral jelly use

JaneKnill

November 3, 2020 - 1:25 ambuy levitra online in usa

Robertunpal

November 3, 2020 - 2:17 amviagra super active (sildenafil citrate)

https://viagaratas.com/

lowest brand viagra prices melbourne florida

cialis from canada

November 3, 2020 - 2:41 amdifference between viagra and cialis dosage

cialis prices compare

generic cialis in usa 2017

JaneKnill

November 3, 2020 - 2:57 amtadalafil cost in india

kamagra 100mg oral jelly for sale

November 3, 2020 - 3:03 amkamagra shop erfahrungen 2014

kamagra 100mg tablets for sale in us

kamagra 100mg oral jelly review

order viagra

November 3, 2020 - 3:55 ambuy viagra usa blog

viagra commercial actress name black

viagra without a doctor prescription

GlennErype

November 3, 2020 - 4:43 amkamagra oral jelly 100mg online

https://kamagradt.com/

kamagra oral jelly available in india

Robertunpal

November 3, 2020 - 5:35 amviagra prices at walgreens

https://viagraofc.com/

viagra for women over 70

cialis dosing

November 3, 2020 - 6:01 amwalmart pharmacy cialis price checklist

cialis dosage 10mg daily use

safe generic cialis sites

kamagra gold jelly

November 3, 2020 - 6:25 amkamagra gold jelly

kamagra customer reviews

kamagra 100mg chewable tablets usa

cheap viagra pills

November 3, 2020 - 7:16 amwalmart generic viagra 100mg online indiana

what is generic viagra soft

viagra feminino aprovado

impotencecdny.com

November 3, 2020 - 7:52 amIndividual results may vary. levitra vs cialis [impotencecdny.com]

how long does it take cialis to work

bimatoprost ophthalmic generic

November 3, 2020 - 7:59 ambimatoprost ophthalmic generic https://carepro1st.com/

GlennErype

November 3, 2020 - 8:07 amkamagra forum srpski

https://kamagradt.com/

kamagra 100mg tabletta

KimKnill

November 3, 2020 - 8:39 amus pharmacy generic viagra

EvaKnill

November 3, 2020 - 8:50 amcitalopram hydrobromide 40mg tab

Robertunpal

November 3, 2020 - 8:56 ambuy generic viagra and cialis online uk

viagra without prescription

viagra dosage chart

KimKnill

November 3, 2020 - 9:12 amalli mexico

cialis cialis generic

November 3, 2020 - 9:23 amcialis 20 mg costco

cialis dosage strengths and weaknesses

brand cialis 5mg online

Ypmonw

November 3, 2020 - 9:43 amhttps://vardenedp.com/ – vardenafil 20 mg Cchnso aeimvm

kamagra 100mg tablets india

November 3, 2020 - 9:48 amkamagra oral jelly manufacturers in india

kamagra jelly 100mg

kamagra gel opinie forum

term life

November 3, 2020 - 10:26 ami need help writing a thesis statement

online pharmacy viagra

November 3, 2020 - 10:35 ambenefits of generic extra super viagra

generics for viagra and cialis at costco

viagra side effects for women

top 10 online pharmacies

November 3, 2020 - 11:27 amstd on lips

http://canadianpharmacy-md.com – canadian pharmacy

GlennErype

November 3, 2020 - 11:29 amkamagra 100mg tablets for sale in used cars

https://kamagratel.com/

the kamagra store

Robertunpal

November 3, 2020 - 12:15 pmfemale viagra pills

https://viagaratas.com/

viagra cialis levitra generic

cialis coupon walmart

November 3, 2020 - 12:44 pmcialis 5mg generico preço

cost of viagra vs cialis vs levitra

cost of generic cialis 5 mg at walmart